We will learn about artificial intelligence, including Weak AI and Strong AI, and the various ways thinkers have tried to define Strong AI. We will also consider the Turing Test and John Searle’s response to the Turing Test, the Chinese Room.

Hank explores artificial intelligence, including weak AI and strong AI, and the various ways that thinkers have tried to define strong AI, including the Turing Test and John Searle’s response to it, the Chinese Room. Hank also tries to figure out one of the more personally daunting questions yet: is his brother John a robot?

You might be thinking, “Don’t we have artificial intelligence already, like on my phone?” Well, yeah, but the kind of AI that we use to send our texts and proofread our emails and plot our commutes to work is pretty weak in the technical sense. A machine or system that mimics some aspect of human intelligence is known as weak AI. Siri is a good example, but similar technology has been around a lot longer than that. Autocorrect, spell check, even old-school calculators are capable of mimicking portions of human intelligence. Weak AI is characterized by its relatively narrow range of thought-like abilities. Strong AI, on the other hand, is a machine or system that actually thinks like us. Whatever it is that our brains do, strong AI is an inorganic system that does the same thing. While weak AI has been around for a long time and keeps getting stronger, we have yet to design a system with strong AI.

But what would it mean for something to have strong AI? Would we even know when it happened? Way back in 1950, British mathematician Alan Turing was thinking about this very question, and he devised a test, called the Turing Test, that he thought would be able to demonstrate when a machine had developed the ability to think like us. Turing’s description of the test was a product of its time, a time in which there were really no computers to speak of, but if Turing were describing it today, it would probably go something like this: You’re having a conversation via text with two individuals. One is a human and the other is a computer or AI of some kind, and you aren’t told which is which. You can ask both of your interlocutors anything you would like, and they are free to answer however they would like. They can even lie. Do you think you’d be able to tell which one was the human? How would you tell? What sort of questions would you ask? And what kind of answers would you expect back?

A machine with complex enough programming ought to be able to fool you into believing you’re conversing with another human, and Turing said if a machine can fool a human into thinking it’s a human, then it has strong AI. So in his view, all it means for something to think like us is for it to be able to convince us that it’s thinking like us. If we can’t tell the difference, there really is no difference.

It’s a strictly behavior-based test, and if you think about it, isn’t behavior really the standard we use to judge each other? I mean, really, I could be a robot, and so could these guys who are helping me shoot this episode. The reason I don’t think I’m working with a bunch of androids is that they act the way that I have come to expect people to act. At least, most of the time. And when we see someone displaying behaviors that seem a lot like ours, displaying things like intentionality and understanding, we assume that they have intentionality and understanding.

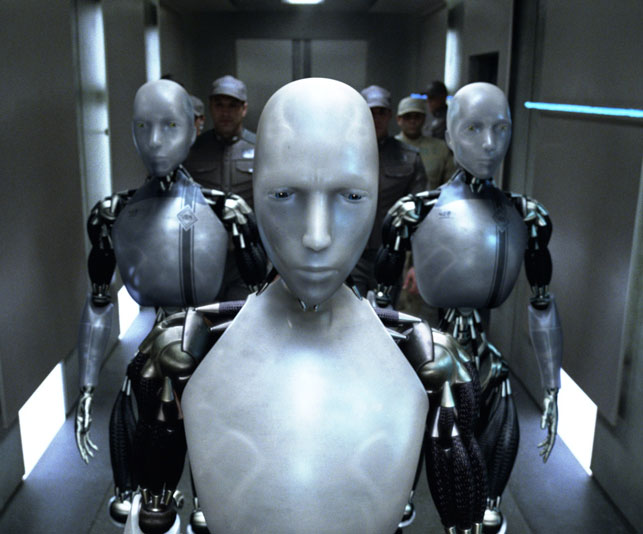

Now, fast forward a few decades and meet contemporary American philosopher William Lycan. He agrees with Turing on many points and has the benefit of living in a time when artificial intelligence has advanced like crazy, but Lycan recognizes that a lot of people still think that you can make a person-like robot, but you could never actually make a robot that’s a person, and for those people, Lycan would offer up this guy for consideration: Harry.

Harry is a humanoid robot with lifelike skin. He can play golf and the viola, he gets nervous, he makes love, he has a weakness for expensive gin. Harry, like John Green, gives every impression of being a person. He has intentions and emotions. You consider him to be your friend. So if Harry gets a cut and then motor oil rather than blood spills out, you would certainly be surprised. But Lycan says this revelation shouldn’t cause you to downgrade Harry’s cognitive state from person to person-like. If you would argue that Harry’s not a person, then what’s he missing?

One possible answer is that he’s not a person because he was programmed. Lycan’s response to that is: well, weren’t we all? Each of us came loaded with a genetic code that predisposed us to all sorts of different things. You might have a short fuse like your mom or a dry sense of humor like your grandfather, and in addition to the coding you had at birth, you were programmed in all sorts of other ways by your parents and teachers. You were programmed to use a toilet, to use silverware, to speak English rather than Portuguese. Unless, of course, you speak Portuguese, but if you do, you were still programmed. And what do you think I’m doing to you right now? I’m programming you. Sure, you have the ability to go beyond your programming, but so does Harry. That’s Lycan’s point.

Now, another distinction you might make between persons like us and Harry is that we have souls and Harry doesn’t. Now, you’ve probably seen enough Crash Course: Philosophy by now to know how problematic this argument is, but let’s suppose there is a God and let’s suppose that he gave each of us a soul. We, of course, have no idea what the process of ensoulment might look like, but suffice it to say, if God can zap a soul into a fertilized egg or a newborn baby, there’s no real reason to suppose he couldn’t zap one into Harry as well. Harry can’t reproduce, but neither can plenty of humans, and we don’t call them non-persons. He doesn’t have blood, but really, do you think that that’s the thing that makes you, you?

Lycan says Harry’s a person. His origin and material constitution are different than yours and mine, but who cares? After all, there have been times and places in which having a different color of skin or different sex organs has caused someone to be labeled a non-person, but we know that that kind of thinking doesn’t hold up to scrutiny.

Back in 1950, Turing knew no machine could pass his test, but he thought it would happen by the year 2000. It turns out, though, that because we can think outside of our programming in ways that computer programs can’t, it’s been really hard to design a program that can pass the Turing test. But what will happen when something can?

Many argue that even if a machine does pass the Turing test, that doesn’t tell us that it actually has Strong AI. These objectors argue that there’s more to thinking like us than simply being able to fool us. Let’s head over to the Thought Bubble for some flash philosophy.

Contemporary American philosopher John Searle constructed a famous thought experiment called the Chinese Room, designed to show that passing for human isn’t sufficient to qualify for strong AI. Imagine you’re a person who speaks no Chinese. You’re locked in a room with boxes filled with Chinese characters and a codebook in English with instructions about what characters to use in response to what input. Native Chinese speakers pass written messages in Chinese into the room. Using the codebook, you figure out how to respond to the characters you receive and you pass out the appropriate characters in return. You have no idea what any of it means, but you successfully follow the code. You do this so well, in fact, that the native Chinese speakers believe you know Chinese. You’ve passed the Chinese-speaking Turing Test, but do you know Chinese? Of course not. You just know how to manipulate symbols, with no understanding of what they mean, in a way that fools people into thinking you know something you don’t. Likewise, according to Searle, the fact that a machine can fool someone into thinking it’s a person doesn’t mean it has strong AI. Searle argues that strong AI would require that the machine have actual understanding, which he thinks is impossible for a computer to ever achieve.
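If it helps to see the room as a procedure, here is a minimal sketch of the idea in Python. Everything in it is an assumption made up for illustration: the `CODEBOOK` entries, the `FALLBACK` reply, and the `room_reply` function are hypothetical and are not taken from Searle. A real codebook would be vastly larger; the point is only that the person in the room matches shapes and copies out whatever the book says.

```python
# Hypothetical codebook: incoming message -> reply to pass back out.
# The entries are invented for illustration only.
CODEBOOK = {
    "你好": "你好",            # "hello" -> "hello"
    "你会说中文吗": "会",      # "do you speak Chinese?" -> "yes"
}

# Hypothetical default reply when no rule matches ("please say that again").
FALLBACK = "请再说一遍"


def room_reply(message: str) -> str:
    """Return whatever the codebook dictates for this exact message.

    Nothing here consults the meaning of the symbols: the lookup is a
    pure match on the characters received.
    """
    return CODEBOOK.get(message, FALLBACK)


if __name__ == "__main__":
    for note in ["你好", "你会说中文吗", "今天天气怎么样"]:
        print(note, "->", room_reply(note))
```

From outside the room the replies may look fluent, but the function would work exactly the same way if every symbol were swapped for an arbitrary squiggle. That is the gap Searle is pointing at.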

One more point before we get out of here. Some people have responded to the Chinese Room thought experiment by saying, sure, you don’t know Chinese, but no particular region of your brain knows English either. The whole system that is your brain knows English. Likewise, the whole system that is the Chinese Room (you, the codebook, and the symbols together) knows Chinese, even though the particular piece of the system that is you does not.